The Mobile for Reproductive Health (m4RH) digital health program is an automated, interactive and on-demand short message service (SMS, or text message) system that provides simple, accurate and relevant reproductive health information. FHI 360 led the development of m4RH and piloted it in Kenya and Tanzania in 2009. It was one of the first platforms to take advantage of the increasing ubiquity of cell phones to put accurate family planning information for decision making directly into the hands of those who need it. m4RH is still operational in Tanzania.

When users access m4RH, the system captures the cell phone data, producing massive amounts of participant interaction data. During the time m4RH has been operational in Tanzania, more than 400,000 unique users across every district of the country have used the service. In 2018, our research team conducted a secondary analysis of the longitudinal data captured in the system from 127 districts in Tanzania from September 2013 to August 2016. In this post, we share what we learned from this analysis about designing digital health interventions, as well as how system-generated data can be analyzed to monitor and improve programming or to evaluate its impact.

What is the m4RH program and what data did we use?

m4RH is designed as a “pull” system, meaning information is provided to users when they request it. Users do this by texting (SMS-ing) an m4RH short code (e.g., 15014) to receive the menu of available content on a variety of sexual and reproductive health topics (e.g., contraceptive methods; family planning clinic locations; role model stories that model positive health attitudes, norms and behaviors). Users then respond with an additional text message containing the numerical code that corresponds to the menu item they want to access. Our team specifically designed the program as a pull system, rather than a push system that would send regular timed messages to all registered users, to promote privacy and confidentiality. We knew from our formative work that household members and couples often share phones. We also knew that many of our users were youth, and we did not want to put them at risk by sending unrequested messages on sensitive topics to their (possibly shared) phones.

For this study, we extracted data about each request to the m4RH system from system logs, including type of information requested by the user (e.g., contraceptive methods, HIV and STI prevention, sex and pregnancy, and puberty) and date and time of the request. We used descriptive analysis for participant interactions with the system and adapted the conversion funnel framework and indicators to assess and map user journeys through the m4RH system.
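To make the funnel idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the code or the indicators used in the study: the record layout `(user_id, code)`, the `MENU` placeholder for the main-menu request, the content codes, and the three stage names are all hypothetical. Each stage is a subset of the one before it, so every conversion rate falls between 0 and 1.

```python
from collections import defaultdict

# Hypothetical placeholder for a main-menu request; real short codes differ.
MAIN_MENU = "MENU"


def funnel_counts(requests):
    """Count unique users at each (assumed) funnel stage.

    `requests` is an iterable of (user_id, code) pairs, one per logged request.
    """
    codes_by_user = defaultdict(set)
    for user_id, code in requests:
        codes_by_user[user_id].add(code)

    # Content codes are everything a user requested other than the main menu.
    content_counts = [len(codes - {MAIN_MENU}) for codes in codes_by_user.values()]

    return [
        ("activated", len(codes_by_user)),                        # sent any request
        ("any_content", sum(1 for n in content_counts if n >= 1)),     # opened a content item
        ("multiple_content", sum(1 for n in content_counts if n >= 2)),  # opened two or more
    ]


def conversion_rates(stages):
    """Share of users at each stage relative to the stage before it."""
    rates = {}
    for (_, previous), (name, current) in zip(stages, stages[1:]):
        rates[name] = current / previous if previous else 0.0
    return rates


# Made-up sample log, for illustration only.
sample_requests = [
    ("u1", "MENU"), ("u1", "11"), ("u1", "21"),
    ("u2", "MENU"),
    ("u3", "MENU"), ("u3", "11"),
    ("u4", "11"),
]

stages = funnel_counts(sample_requests)
rates = conversion_rates(stages)
```

Counting unique users rather than raw requests keeps one very active user from inflating a stage, which matters when the log holds millions of queries.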

What did we learn from users of the digital health intervention?

Informed by previous digital health interventions, our team defined four key dimensions of user engagement to explore in our secondary analysis: environment, interaction, depth, and loyalty. We learned quite a lot from this exercise and found looking back at the user data very encouraging!

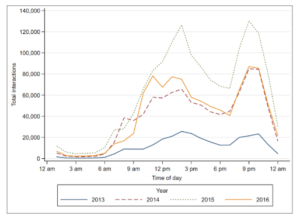

A total of 409,768 new users engaged with m4RH over the evaluation period (interaction). The figure from our article, shown here, illustrates the time of day users accessed the program. We also know that users engaged with the program for 64 minutes on average. We found that use trends remained fairly consistent over the evaluation period (loyalty), demonstrating the continued relevance of an SMS-based platform, even in the face of increasing smartphone penetration. We were especially pleased to see that close to 40% of our users were return users and that our active use rate (the proportion of users who accessed specific menu content after activating system content) was near 90% overall.
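Indicators like these can be derived directly from timestamped request logs. The sketch below is a hedged illustration, not the study's method: it assumes a hypothetical record layout of `(user_id, timestamp)` and defines a return user as someone with requests on more than one calendar day, which may differ from the definition used in our article. The sample data is made up.

```python
from collections import Counter, defaultdict
from datetime import datetime


def return_user_share(requests):
    """Share of users with requests on more than one calendar day.

    This definition of a return user is an assumption for illustration.
    """
    days_by_user = defaultdict(set)
    for user_id, timestamp in requests:
        days_by_user[user_id].add(timestamp.date())
    if not days_by_user:
        return 0.0
    returning = sum(1 for days in days_by_user.values() if len(days) > 1)
    return returning / len(days_by_user)


def requests_by_hour(requests):
    """Number of requests logged in each hour of the day (0-23)."""
    return Counter(timestamp.hour for _, timestamp in requests)


# Made-up sample log, for illustration only.
sample_log = [
    ("u1", datetime(2015, 3, 1, 8, 15)),
    ("u1", datetime(2015, 3, 4, 20, 30)),
    ("u2", datetime(2015, 3, 1, 8, 45)),
    ("u3", datetime(2015, 3, 2, 20, 5)),
    ("u3", datetime(2015, 3, 2, 20, 10)),
    ("u4", datetime(2015, 3, 5, 12, 0)),
    ("u4", datetime(2015, 3, 9, 12, 30)),
    ("u5", datetime(2015, 3, 6, 9, 0)),
]

share = return_user_share(sample_log)
hourly = requests_by_hour(sample_log)
```

Here `share` is the fraction of sample users who came back on a later day, and `hourly` gives the counts that a time-of-day chart would plot.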

While data on activated users demonstrate a high level of interaction and loyalty, only 50% of users moved beyond the main menu (depth). Messages about family planning methods were the most popular, even after information about other sexual and reproductive health (SRH) topics was introduced in later years.

We did find that some aspects of the m4RH program were not so popular. For example, less than 12% of users accessed our clinic locator database, and users in urban areas were more likely than those in rural areas to explore this topic (environment).

How can we use what we learned?

Findings from our analysis of user data have important implications for future m4RH program design and scale-up. Below, we describe two of these implications.

First, we learned that users were sometimes bypassing the main menu when re-accessing specific messages, even though our team had conducted extensive user testing of the menu flow during the design phase of m4RH. It seems that users simply memorized the codes associated with particular messages!

This bypassing had implications for our analysis, as well as for future program design. The finding also points to the importance of continuous user-centered design approaches, as user behavior changed over the course of m4RH implementation. In the future, we must identify a design option that both maintains the intention of menu-based design and is responsive to the user behavior patterns we identified through the analysis of our data.
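One way such bypassing could be spotted in request logs is to group each user's requests into sessions and check whether a session opens with a content code instead of the main menu. The sketch below is only an illustration under assumptions: the `(timestamp, code)` layout, the `MENU` placeholder, the content codes, and the 30-minute inactivity gap are all hypothetical choices, not the rules applied in our analysis.

```python
from datetime import datetime, timedelta

# Hypothetical placeholder for a main-menu request; real short codes differ.
MAIN_MENU = "MENU"
# Assumed inactivity gap that ends a session; an arbitrary illustrative value.
SESSION_GAP = timedelta(minutes=30)


def split_sessions(events):
    """Split one user's (timestamp, code) events into sessions by inactivity gap."""
    sessions = []
    for timestamp, code in sorted(events):
        last_timestamp = sessions[-1][-1][0] if sessions else None
        if last_timestamp is not None and timestamp - last_timestamp <= SESSION_GAP:
            sessions[-1].append((timestamp, code))
        else:
            sessions.append([(timestamp, code)])
    return sessions


def bypass_share(events):
    """Share of a user's sessions that start with a content code, not the menu."""
    sessions = split_sessions(events)
    if not sessions:
        return 0.0
    bypassed = sum(1 for session in sessions if session[0][1] != MAIN_MENU)
    return bypassed / len(sessions)


# Made-up events for a single user, for illustration only.
user_events = [
    (datetime(2015, 6, 1, 9, 0), "MENU"),
    (datetime(2015, 6, 1, 9, 2), "11"),
    (datetime(2015, 6, 3, 18, 0), "11"),   # returns straight to a remembered code
    (datetime(2015, 6, 5, 7, 30), "11"),
    (datetime(2015, 6, 5, 7, 31), "21"),
    (datetime(2015, 6, 8, 10, 0), "MENU"),
]

user_sessions = split_sessions(user_events)
user_bypass_share = bypass_share(user_events)
```

The choice of session gap changes the result, so any analysis along these lines would need to test how sensitive the bypass share is to that threshold.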

Second, many of our findings have important implications for the adaptation and scale-up of m4RH in new locations. For example, we developed the clinic locator database on the assumption that providing knowledge about where contraceptive services are offered might increase uptake. However, as noted above, very few users accessed this information. Perhaps users already know where clinics are located but would rather know other users’ opinions of the health services offered there, or maybe the clinic locator is only relevant for users in urban areas (since that is where we saw the greatest number of users accessing this content). Whatever the reason, users did not seem interested, and more information is needed about the specific type of clinic information users may need, if they need any at all.

The system-level data generated by the m4RH program was dense and the dataset was large: we had over 4 million system queries before cleaning. Identifying meaningful measures was a long and complex process, and very few published articles on secondary data analysis of digital health programs existed for us to draw upon. We were honestly not sure how useful the process would be. Yet this vast collection of data gave us a unique opportunity to gain deeper insight into user engagement, programmatic strengths, and areas that need improvement to maximize efficacy. We learned much more than we thought we would and are excited to share our process as a model that other digital health programs can replicate.

You can learn even more about our m4RH study results in FHI 360’s Research Roundtable video series.