Research is a conversation. Researchers attempt to answer a study question, and then other groups of researchers support, contest or expand on those findings. Over the years, this process produces a body of evidence representing the scientific community’s conversation on a given topic. But what did those research teams have to say? What did they determine is the answer to the question? How did they arrive at that answer?

Systematic reviews apply a rigorous methodology for identifying and synthesizing evidence on a research topic, which may include a variety of social and health-related subjects. (Check out these useful resources and libraries from the Campbell Collaboration and Cochrane Collaboration.) The goal of a systematic review is to examine the literature in a way that is 1) exhaustive, 2) reproducible and 3) objective in the interpretation of findings. Developing a systematic review protocol requires thoughtful decision-making about how to reduce various forms of bias at each stage of the process. Below we discuss some of the decisions we made to reduce bias in our systematic review exploring girls’ math identity, in the hope that they will inform others undertaking similar efforts. In particular, we designed our protocol to address source selection bias, publication bias, construct validity, reviewer bias and conclusion bias.

Planning our systematic review was a collaborative process requiring insights from both content and methodological experts. As a team, we identified potential areas of bias, created strategies for improving the validity of the review itself, and developed a detailed protocol (available here) to structure our efforts. This process will ultimately strengthen our ability to successfully summarize the evidence and provide insights on how to effectively foster girls’ interest and engagement in math and related fields.

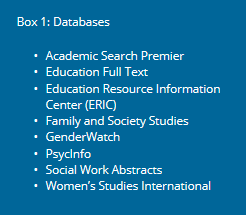

One of the first sources of bias we addressed was source selection bias, which can arise if researchers select an inappropriate assortment of sources. Articles should be selected from multiple databases representing literature across all relevant disciplines. For example, our review draws from literature in the education, sociology, psychology and gender fields (see Box 1). Incorporating literature from various disciplines improves the likelihood of capturing differing, equally important perspectives on a topic, thereby reducing “groupthink” or the echo chamber effect. For instance, education experts may examine the review question from a pedagogical standpoint, whereas gender experts may assess societal expectations of girls versus boys when it comes to excelling in math.

Related to source selection bias is publication bias, in which the published research is not representative of all studies conducted on a topic because of the standards for publishing in peer-reviewed journals. For example, null findings are difficult to publish, so the peer-reviewed literature returned by a database search may have a positive bias, tending to show more favorable study results. One solution we applied was to include high-quality grey literature (non-peer-reviewed literature) to complement the database search. As a primary source for high-quality grey literature, we elected to use the Academic Search Premier database, which hosts graduate dissertations and educational reports in addition to peer-reviewed articles. We also plan to conduct hand-searches, that is, reviews of the reference lists of relevant systematic reviews and meta-analyses identified through our search process. We will incorporate any relevant peer-reviewed articles and grey literature that the database search missed.
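
As a rough illustration of that last step, the sketch below finds hand-searched titles that a database search did not return. It is a minimal example, not our actual workflow: the function names and the title-matching rule are assumptions made for illustration, and real reference managers match on more than titles.

```python
import re


def normalize_title(title):
    """Lowercase a title and collapse punctuation and spacing to single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def missed_by_database(database_titles, hand_search_titles):
    """Return hand-searched titles that do not match any database title.

    Matching on normalized titles is an assumption for illustration only.
    """
    seen = {normalize_title(t) for t in database_titles}
    missed = []
    for title in hand_search_titles:
        key = normalize_title(title)
        if key not in seen:
            seen.add(key)  # also skips duplicates within the hand-search list
            missed.append(title)
    return missed
```

Any titles returned this way would still need to go through the same eligibility screening as the articles found in the databases.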

Before executing the database search, however, we clearly defined key concepts. To reduce threats to construct validity in a systematic review, the included studies must measure key concepts the way the review team defined them. For example, our team built a consensus definition, grounded in theory, of girls’ math identity: girls’ beliefs, attitudes, emotions, and dispositions about math and their resulting motivation to engage and persist in related activities. We then mapped out the various ways this concept may be captured and operationalized across disciplines. Our team eventually agreed on the following list of terms in their various forms: identity, perception, attitude, disposition, belief and self-concept.

Our process was strengthened through the support of a reference librarian, who provided guidance on which terms may or may not be appropriate to include in different database searches. For instance, we hoped to use the term “STEM” as the acronym for the fields of science, technology, engineering and math. STEM is widely recognized and often not spelled out in research. However, in certain databases, this term created significant noise, pulling in irrelevant articles regarding stem cells and brain stems. The process of creating a list of search terms that adequately represents key concepts while also eliminating unwanted articles was iterative and relied heavily on the combined knowledge of content and methodological experts.
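
To make the search-term problem concrete, here is a minimal sketch of how a Boolean query might be assembled from the concept terms above, and how titles about stem cells or brain stems could be flagged as noise. The query syntax is generic and each database has its own; the `math*` subject term, the noise pattern and the function names are all assumptions for illustration, not the search strings we used.

```python
import re

# Concept terms listed in the post for girls' math identity.
IDENTITY_TERMS = [
    "identity", "perception", "attitude",
    "disposition", "belief", "self-concept",
]

# Hypothetical noise pattern based on the stem cell / brain stem example.
NOISE_PATTERN = re.compile(r"\b(stem\s+cells?|brain\s*stems?)\b", re.IGNORECASE)


def build_query(concept_terms, subject="math*"):
    """Join concept terms with OR, then combine them with the subject using AND."""
    concept_block = " OR ".join(f'"{term}"' for term in concept_terms)
    return f"({concept_block}) AND {subject}"


def is_noise(title):
    """Return True if a title looks like biomedical 'stem' noise."""
    return NOISE_PATTERN.search(title) is not None
```

In practice, a filter like `is_noise` would only flag records for a human to check, since excluding articles automatically could introduce a bias of its own.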

To address reviewer bias, the team uses at least two reviewers, which makes it possible to assess inter-rater reliability, or the extent to which reviewers consistently agree on which articles should be included versus excluded. We conducted a pilot training in which all reviewers assessed the eligibility of 20 articles. After each person screened the articles by title and abstract, the team discussed the group’s decisions to move articles to the next stage. This pilot was an opportunity for lead investigators to train reviewers on key concepts and criteria, while also clarifying written procedures to ensure reproducibility.
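
One common way to quantify agreement between two reviewers is Cohen's kappa, which adjusts raw agreement for the agreement expected by chance. The sketch below is illustrative only: the post does not say which statistic our team used, the screening decisions are invented, and with more than two reviewers a statistic such as Fleiss' kappa would be the usual choice.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Proportion of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Invented decisions for a 20-article pilot (not our data).
reviewer_1 = ["include"] * 10 + ["exclude"] * 10
reviewer_2 = ["include"] * 8 + ["exclude"] * 12
print(round(cohens_kappa(reviewer_1, reviewer_2), 2))  # 0.8
```

Here the reviewers agree on 18 of 20 articles (0.9), chance agreement is 0.5, and kappa is (0.9 - 0.5) / (1 - 0.5) = 0.8.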

As described, many steps can be taken to improve the validity of the review itself. Yet, this is not an exhaustive list of types of bias and the approaches for addressing each. The role of the reviewer is to be cognizant of these challenges and provide a transparent approach for addressing them. In this way, systematic reviews can foster even greater dialogue on a particular topic, including evidence-based approaches for nurturing girls’ interest and engagement in STEM-related fields. If you have or know of a study on this topic that is in the grey literature or in process, please alert us to it in the comments or by sending us an email.

Photo credit: Jessica Scranton/FHI 360