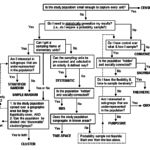

So you want a probability sample of households to measure their level of economic vulnerability; or to evaluate the HIV knowledge, attitudes, and risk behaviors of teenagers; or to understand how people use health services such as antenatal care; or to estimate the prevalence of stunting or the prevalence and incidence of HIV. You know that probability samples are needed for valid (inferential) statistical analysis. But you may ask, what does it take to obtain a rigorous probability sample?

The answer to your question is simply to stick to the principles for probability samples (see previous blog post on sampling here). However, advising you to simply stick to the principles is not the same as saying that sticking to the principles is simple. Let’s review how the principles of probability samples apply to household sampling:

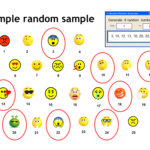

- Which household is selected into the sample is exclusively dictated by chance (i.e., random selection).

- Each eligible household in the entire target population has a chance to be selected. The probability of selection need not be equal, but non-zero and known.

- One should be able to track how many units are selected out of the total number eligible units. This is needed to construct sampling weights. [1]

- Data must be collected in every household selected.

How much you stray from these principles determines how valid your results will be. Validity of results in this sampling context means that data collected from the sample can be used to perform quantitative analyses (e.g., calculate the prevalence of stunting) that can be used to draw conclusions and make recommendations about the entire target population of interest. If, for example, certain portions of the population are consistently missed in your sampling approach, the results from the sample will be biased, hence validity is threatened.

↑ BIAS = VALIDITY ↓

A common approach when sampling households is to first sample enumeration areas (EAs) or census tracks as defined by the local census bureaus. This approach is very convenient because EAs are defined by the bureaus to make the census manageable. They tend to be of similar size (approximately 200 households); they are updated in preparation for the census and other surveys conducted by the bureaus; they are mapped; and important information, such as population sizes, rural/urban designation, and socioeconomic status classification about each EA, is available. This all sounds great until we’re faced with the realities on the ground.

In a recent study my colleagues and I conducted in East Africa to evaluate a nutrition program, we planned to select EAs as usual, but found that the national agency that manages the EAs was preparing for the census. This demand on their time was affecting sampling services to the public, and we were unable to obtain the necessary information regarding the EAs.

A second challenge we faced in our study was that some selected areas became inaccessible due to weather conditions and one area had problems with border unrest that made it unsafe to survey. The most scientifically valid approach would have been to wait until the situations in these areas improved, but waiting too long would have put an undue strain on resources. So we replaced these areas with otherwise similar areas, but that were safe to access. This is a common approach, but one that threatens the validity of the sample. The equivalence of the replacements to the originally selected areas can never be verified and computing correct selection probabilities may be difficult. Random selection of reserve samples (a random sample of households selected at the time of the original selection, but held in reserve until replacement households are needed) is sometimes implemented to alleviate this problem, but they can’t ensure the equivalence of the replacement units either. Validity threat not averted 🙁 .

To assess the impact of the replacement approach to our results we plan to conduct sensitivity analyses by looking at the study results with and without the replaced areas. This will simply be an assessment to help us understand the effect of the replacements though, not a solution to the threat to validity.

A more rigorous approach may be to exclude areas that we know will be difficult to survey prior to selection, for example, remote and sparsely populated areas. However, this is only rigorous in that we can more cleanly track the selected units from the feasible areas and focus our findings on the sampled population. In other words, the target population is redefined. Findings must be explicitly applied to the new target population instead of incorrectly assuming that the whole population was represented. Such a caveat is something that can be clearly addressed as a limitation in the generalizability of the results.

Other problems one can face with the selected areas is that they are much larger or much smaller than expected due to changes in the population since the information was last updated. If larger, one could implement a segmentation approach for selecting smaller areas within the larger selected area, which does not threaten validity. If smaller, researchers sometimes like to select additional EAs. This latter practice can mess up the probabilities of selection, which could threaten validity if probabilities of selection are not properly computed and used in the analysis. Allowing a reduction in the sample size may be a better option than selecting additional units ad hoc.

In Part II, I’ll move on to the challenges we may face in the selection of households within the EAs or segments while continuing to emphasize the sampling principles. Stay tuned!

[1] Note: Even in self-weighted designs, where the probability of selection for each household is uniform, tracking the information to compute weights is useful for validation and documentation purposes. Also, sampling weights that were planned to be uniform may end up needing adjustments due to sampling implementation issues.