By: Annette N. Brown with Katherine Whitton

The challenges of human development are complex, but researchers are trained to be specialists. They specialize in academic fields, such as economics and anthropology, and often in sectors, such as education and health. To adequately address some of the most pressing questions, researchers need either to expand their own specializations or to collaborate. Both, however, are inhibited by divergent publication practices across disciplines, especially between public health and the social sciences.

In a companion post, I present research I conducted with Katherine Whitton’s assistance comparing public health and social science publication practices using data from 3ie’s Impact Evaluation Repository (hereafter the IER). That post presents the results for authorship: the number of authors, their gender and the income status of the country of their primary affiliation. In this post, I present the results for publication lags and argue that we need to address the divergent publication practices of public health and social science.

Methods

The companion post provides more details about the methods, but here is a quick summary. The IER is a repository of impact evaluations conducted on interventions, programs and policies in low- and middle-income countries. We drew a random sample of just over 400 studies from the population of studies included in the 2015 comprehensive update of the repository. Development impact evaluations provide a useful sample for comparing public health and social science publication practices because both disciplines are heavily involved in, and use similar methods for, these kinds of studies. The sample has roughly equal numbers of publications from the two disciplines and covers the years 2000 through 2014.

We manually coded each study in the sample for the month and year of publication or posting and the month and year in which end-line data collection ended. We are interested in how long it takes for findings to become publicly available after the end of data collection, which we call the publication lag. We measure publication lag in months. If a journal published an article online before print, we coded the date it was published online.
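The lag calculation can be sketched in a few lines of Python. This is a minimal illustration, not the code used for the analysis: it assumes dates are coded only to the month, counts the lag as whole calendar months between the two dates, and uses hypothetical names (`YearMonth`, `publication_lag`) chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class YearMonth:
    """A calendar month, assumed to be the finest resolution coded per study."""
    year: int
    month: int  # 1 = January, ..., 12 = December

    def index(self) -> int:
        # Months elapsed since year 0, so a lag is a simple subtraction.
        return self.year * 12 + (self.month - 1)


def publication_lag(end_of_endline: YearMonth,
                    published: YearMonth,
                    published_online: Optional[YearMonth] = None) -> int:
    """Months from the end of end-line data collection to public availability.

    If the journal posted the article online before print, the online date is
    used instead of the print date, following the coding rule described above.
    """
    release = published_online if published_online is not None else published
    lag = release.index() - end_of_endline.index()
    if lag < 0:
        raise ValueError("publication date precedes end of data collection")
    return lag


# Hypothetical study: data collection ends March 2010, article online
# January 2013, in print June 2013.
print(publication_lag(YearMonth(2010, 3), YearMonth(2013, 6), YearMonth(2013, 1)))  # → 34
```

Without the online-first date, the same study would be coded with a 39-month lag, which is why the online date matters for journals that publish ahead of print.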

We coded the type of publication outlet, and for this analysis we created a binary variable that distinguishes journal articles from other outlets, including working paper series, institutional reports and dissertations. We also coded each publication source (e.g., journal, working paper series, institution) as either health or social science. All of the health publication sources are journals, so every non-journal publication in the sample falls within social science.

Findings

Before I present the findings on publication lags, I think it is worth noting how the two disciplines compare on missing information. We scoured the full texts and supplementary materials (if provided) for the studies in the sample to find information about when end-line data were collected. Forty-four of the studies (roughly 10% of the sample) provided no such information. Of these, three-quarters (33) are articles in public health journals, a share that greatly exceeds the proportion of public health journal articles in the sample. I was surprised (and dismayed) by this finding. How are data collection dates not specifically mentioned in the CONSORT checklist? It is hard to imagine any intervention in a low- or middle-income country for which the timing could be assumed to have no relevance whatsoever to understanding the context for the findings.

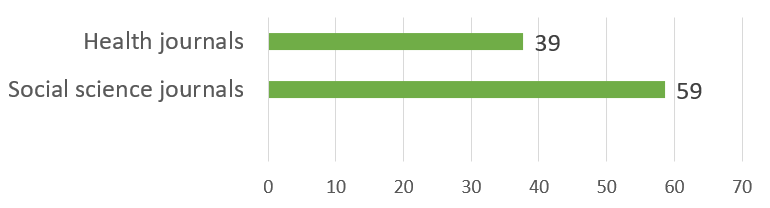

Figure 1 shows the average publication lag for the studies in the sample comparing those published in public health journals to those published in social science journals.

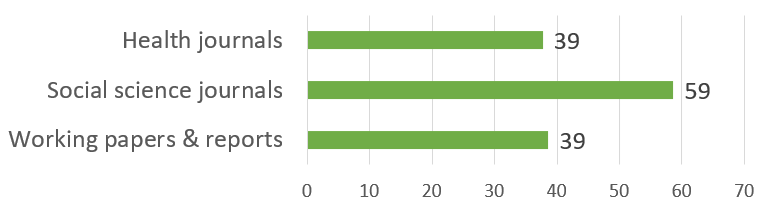

For articles in health journals, the mean lag is a bit over three years (median 36 months) with a minimum of four months and a maximum of 139 months. For development impact evaluations published in social science journals, the mean lag is 59 months (median 54 months) with a minimum of eight months and a maximum of 184 months. That’s an average for social science of almost five years! The social scientists reading this are now exclaiming, “but we get our findings out much faster than five years by posting working papers.” Good point. We look at that too. Figure 2 compares average publication lags now including working papers and reports.

The distribution of publication lag for working papers and reports is more skewed than for the journal articles: the mean is 39 months, but the median is just 28 months, with a minimum of two months and a maximum of 180 months. One clarification for understanding the maximum is that the IER typically captures the latest version of a working paper, so for the working papers at the high end of the range, there may have been earlier versions with shorter publication lags.
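The summary statistics reported here (mean, median, minimum and maximum) are simple to compute. The sketch below uses Python's standard `statistics` module on made-up lag values, not the study's data, to show how a few very long lags pull the mean well above the median in a right-skewed distribution. The function name `summarize` is hypothetical.

```python
from statistics import mean, median


def summarize(lags_by_group: dict[str, list[int]]) -> dict[str, dict[str, float]]:
    """Mean, median, minimum and maximum publication lag (in months) per group."""
    summary = {}
    for group, lags in lags_by_group.items():
        summary[group] = {
            "mean": mean(lags),
            "median": median(lags),
            "min": min(lags),
            "max": max(lags),
        }
    return summary


# Hypothetical lags, NOT the study's data: one very late working paper
# (180 months) drags the mean far above the median.
example = {"working paper / report": [2, 10, 20, 28, 30, 60, 180]}
stats = summarize(example)["working paper / report"]
print(stats)  # mean ≈ 47.1 months, median = 28 months
```

Because the median ignores how extreme the longest lags are, it is the better guide to a typical study's lag when the distribution has a long right tail.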

Based on this sample, it looks like social scientists are making their findings public as fast as public health researchers do, but in the form of working papers. Their journal articles come out almost two years later. Does this matter?
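Using the means and medians reported above for social science outlets, the gap between working papers and reports on one hand and journal articles on the other works out as follows. These compare group summaries rather than matched versions of the same study, since the IER typically captures only one version of each paper.

$$
\begin{aligned}
\text{mean gap} &= 59 - 39 = 20 \text{ months} \\
\text{median gap} &= 54 - 28 = 26 \text{ months}
\end{aligned}
$$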

Discussion

Working papers

In addition, a public working paper allows a researcher to lay claim to a research question or finding in advance of the (sometimes long) process of journal publication. Allaying the fear of being scooped can help improve the quality of the final article because it lets researchers take more time to confirm the robustness of their findings and revise their manuscript before journal submission.

Sullivan also makes the argument, as many others have, that working papers allow researchers to provide valuable findings to policymakers and program managers faster. But this raises the question: faster than what? If we look back at the comparison in figure 2, the answer is faster than publishing in social science journals, but not faster than publishing in public health journals.

Journals

Public health researchers, on the other hand, have strong incentives to complete their steps in the publication process quickly, because if these researchers want to publish in a journal at all, they have to publish in a journal first. Most public health journals refuse to review manuscripts that present findings that have already been made public, and even when a manuscript is accepted, the journals “embargo” the findings until publication. To the extent that public health researchers and journals want research findings to be available for citation and use, both have incentives to move quickly in the publication process.

While the evidence in figure 2 does not necessarily imply that social science journals take longer than public health journals to publish, anecdotal evidence does suggest that social science journals on average have slower turnaround times than public health journals. (Although there is increasing competition, at least among economics journals, to reduce turnaround times, especially through liberal use of desk rejection. See one analysis here.)

If working papers exist to compensate for the slowness of social science journals, isn’t it a good thing that they make social science findings publicly available just as quickly as public health findings? In my opinion, no, because the findings presented in working papers are rarely final and may change in meaningful ways.

Final results?

I remember a research seminar several years ago in which the speaker presented a draft paper with potentially important findings based on end-line data that were already five years old. I asked about the time lag. The speaker responded that the team had not yet submitted a manuscript because one of the co-authors was “famous” and did not have time to help finish the paper. The speaker then clarified that the team had presented the study’s results to the government of the country where the research was conducted immediately after collecting the end-line data. Huh? We were in a room full of thoughtful researchers who were giving great feedback on the analysis, feedback that, if followed, would improve the analysis and could alter the findings. I could only wonder why a journal deserved a better version of the research than the government of the country being studied. Or, if the team did consider the results to be final when they presented them to the government, why were we wasting our time giving feedback?

Berk Özler makes very similar arguments in his oldie-but-goodie post “Working papers are NOT working.” He gives examples from his own research where the findings evolved over successive working paper versions, but people continue to cite the earlier, erroneous, findings. Özler notes that without working papers, though, social science journals would need to work faster. He also suggests that an advantage of not having working papers is that we could go back to double-blind journal reviews.

Results embargoes

The straight-to-journal practice for public health publishing, however, puts immense stock in the quality of double-blind peer review. It assumes that each selected referee is qualified to assess every element of a study, spends the necessary time to carefully review and reviews without bias in spite of her or his anonymity. The straight-to-journal practice also severely limits the number of eyes (even counting four eyes for most researchers) on a manuscript before it is published, at which point it is essentially written in stone. Although science is supposed to provide continued “peer review” after publication, replications and retractions are extremely rare. Many have written about the problems with peer review; here is one critique, “Let’s stop pretending peer review works” and another, “Peer review is not scientific.”

The embargo culture in public health can also delay important findings from becoming public even longer than the journal publication timeline. This delay happens when researchers want to publicize their findings at scientific conferences. These conferences also want to be first to make the results public, so although scientists often already have results when they submit abstracts (conferences prefer abstracts with results), they cannot publish or post the results until the conference happens many months later. It is even more complicated when researchers need to coordinate the journal and the conference so that the results come out in both at the same time. In all this coordination to appease the embargo policies of the journals and the conferences, the interests of the policymakers who might use the results can easily be overlooked.

Regardless of the relative costs and benefits of the two approaches, I think it is detrimental to continue to have such divergent publication practices between public health and social science. It hinders researchers from working and publishing outside their own fields, and it impedes collaboration, the kind of collaboration that can improve the relevance and quality of research in our complex and complicated world. In addition, the lines that journals draw are not always clear in advance, creating costly uncertainty. One blogger tells the story of how a working paper posted on a co-author’s employer’s website (with a tiny number of hits) caused the manuscript to be rejected by a journal after a long revise-and-resubmit process. I read a recent Twitter thread about a similar situation, in which the editor’s rejection letter to the author complimented the anonymous referee for doing a Google search to find the working paper. As many Tweeters noted, so much for the editor and reviewer honoring double-blind review!

Let’s converge!

There are also the ethical questions of when and how to notify study participants of the findings. But again, the importance of notifying study participants is no more specific to public health than the possible sensitivity of study results. Getting the notification process right across all fields of research where humans are involved should be part of converging publication practices.

At the same time, I think the social sciences need to move away from the current working paper model, in which authors post successive drafts of a paper over multiple years before getting it published in a journal. One solution is to adopt an open peer review process in which a journal ties review and publication together by posting the submitted manuscript as a working paper and then posting the referee reports and subsequent author revisions. An example is Gates Open Research, where the format of the citation changes as the article progresses through the review process. Another example is Economics: The Open-Access, Open-Assessment E-Journal. I prefer its model to that of Gates Open Research, because Economics E-Journal clearly differentiates between discussion papers and published articles.

I also think that funders’ open data policies, especially when tied to the date of end-line data collection, will help reduce the time that studies languish as working papers. Authors have an incentive to publish more quickly when their data will soon be, or already are, public. And as more authors face this incentive, they will use their voice and their journal selections to put pressure on journals to speed up review processes.

Two suggestions

If we had a practice like this in place, then in a situation like the one Özler describes, people could see that the working paper being cited has a relatively low confidence index and would have a reason to (or no excuse not to) look for later versions of the study. Journalist’s Resource advises that “in choosing working papers, journalists should communicate with scholars about the progress of their research and how confident they are in the claims they are making.” A confidence index for paper versions would be a way for authors to openly signal that information, perhaps saving the time of journalists and authors when results really are not ready for prime time.

Conclusion

Social science publication practices are characterized by working papers and long journal publication timelines, while public health publication practices are characterized by limited peer review and results embargoes. Neither is optimal for making high-quality research findings available for use in a timely manner. I think that rather than choose one model, we should strive for convergence, taking the best of both models and getting rid of the worst.

Katherine Whitton performed much of the data coding and graphical analysis for this research. I would also like to acknowledge Shayda Sabet, who assisted with a first round of coding for this sample and contributed to early discussions of the analysis. The supplementary materials for this research are available here.

Photo credit: Tigerstock/Freepik