Over the course of my career, I’ve conducted more than 300 individual interviews and over 100 focus group discussions. It’s a favorite part of my job because it connects me with people I might never have met otherwise, and gives me license to ask all kinds of fascinating questions that I wouldn’t typically ask upon a first meeting – about anything from decision-making, fears and desires, to infertility, sex and drugs. It is astounding what people will tell you in the research context if you ask questions with a combination of purpose and genuine interest and then listen carefully, with curiosity and empathy. These are all elements of rapport – the social connection recognized as fundamental to qualitative research – that helps to put the respondent at ease and facilitates the sharing of authentic life experiences and honest opinions. We want to minimize the potential for social desirability bias (the tendency for respondents to say what they think we want to hear or what they perceive to be the “right” answer) by making it clear that we value each individual’s unique experiences and perspectives, regardless of whether they align with or diverge from what “other people” might think.

A brief summary of the research project

The Patient-Centered Outcomes Research Institute (PCORI) funded our study specifically to do research on research methods. The main goal of the research was to learn about how the mode of qualitative data collection might affect the data we get. PCORI is interested ultimately in improving the health of patient populations, so we chose a health topic that would affect a broad cross-section of individuals and that would contribute information to a public health problem of interest. At the time, the Zika virus was raising alarm for its devastating effects on a developing fetus, so we chose to focus our research on women’s perceptions of medical risk and vaccine acceptability during pregnancy, in general and as related to the Zika virus and vaccine.

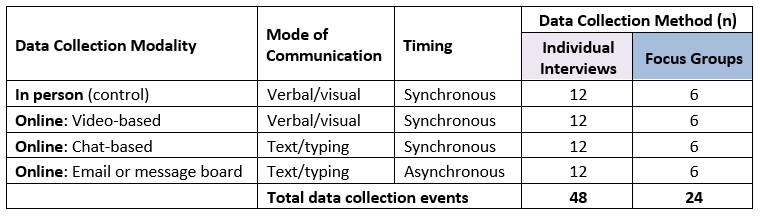

Our study included a total of 171 women who were in between pregnancies. They participated in 48 individual interviews and 24 focus groups that were evenly divided across the four modes of data collection (Table 1). The study was quasi-experimental, meaning that participants were assigned to a mode of data collection in a systematic order, rather than at random or according to their preference or convenience. We did, however, randomly assign them to take part in either an individual interview or a focus group. Given that we wanted to compare the data generated by the different data collection modes, we attempted to control for potential confounders by minimizing differences across modalities. Procedures were kept as consistent as possible while in accordance with best practices for each modality, and the data collector (me) and the question guide were identical across all modalities.
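For readers who think in code, the allocation described above can be sketched roughly as follows. This is only an illustration: the rotation order, the participant IDs and the function name `assign` are assumptions made for the example, not a record of the study's actual procedure.

```python
import random
from itertools import cycle

# The four modes of data collection compared in the study
MODES = ["in person", "online video", "online chat-based", "online post-based"]

def assign(participant_ids, seed=0):
    """Hypothetical sketch: rotate through the modes in a fixed order
    (systematic) and draw the event type at random."""
    rng = random.Random(seed)
    mode_cycle = cycle(MODES)
    assignments = []
    for pid in participant_ids:
        mode = next(mode_cycle)  # systematic order, not random
        event = rng.choice(["interview", "focus group"])  # random draw
        assignments.append((pid, mode, event))
    return assignments

# Even split reported in the post: 48 interviews and 24 focus groups over 4 modes
interviews_per_mode = 48 // len(MODES)    # 12
focus_groups_per_mode = 24 // len(MODES)  # 6

# Made-up participant IDs 1..8, purely for illustration
example = assign(range(1, 9))
```

The even split works out to 12 interviews and 6 focus groups per mode.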

Mode of data collection: In person

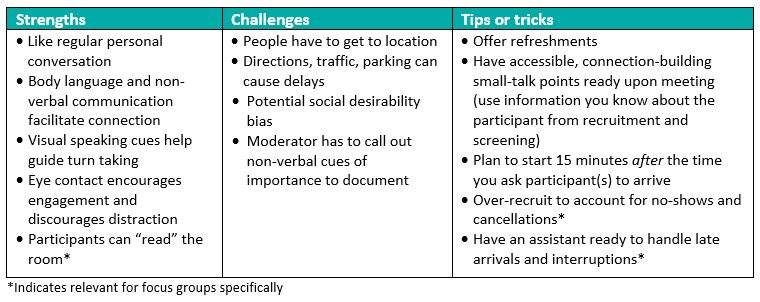

The in-person mode for interviews and focus groups in our study followed traditional qualitative data collection procedures, with the participant(s) and me seated in the same room around a table with a digital voice recorder set up between us. For the purposes of maintaining consistency for the study, we asked women to travel to our office and I conducted all interviews and focus groups in a conference room, with an assistant present for focus groups. But I’ve also conducted in-person interviews in people’s homes, at coffee shops, in clinic spaces, at their offices – wherever is convenient, comfortable and private according to the tastes of the participant, as long as it is also reasonably quiet to facilitate audio recording. Most of the study focus groups took place over the weekend, based on the participants’ availability, which necessitated getting everyone into an otherwise locked office building (with many thanks to my focus group assistant!). As “hosts” of these data collection events, we offered interviewees water or coffee and for each focus group we also provided light snacks, as part of the welcome and rapport building.

Strengths

Challenges

For an in-person meeting of separately located individuals, obviously someone has to travel! That travel adds time and often out-of-pocket cost for either the researcher or the participant, with the potential attendant hiccups of getting lost, having trouble finding parking or navigating public transport, all of which can delay the interview or focus group. Once in the same room, researcher and participant(s) will use the visual cues at their disposal to establish a first impression. This can work both ways for participants, to enhance rapport or to create a feeling of insecurity (e.g., if they perceive a power, knowledge or status imbalance). If rapport is not well-established, the physical presence and perceived relative social position of the researcher may increase the potential for respondents to provide socially desirable answers to questions, or to censor or curtail true feelings. One additional challenge is related to logistics. Throughout the interview or focus group, participants will continue to communicate via non-verbal cues that will not get picked up by the audio recorder. The interviewer or moderator needs to vocalize these responses (e.g., “I think I saw you roll your eyes”) where they are important to make sure this information is captured in the transcript and available during analysis and interpretation.

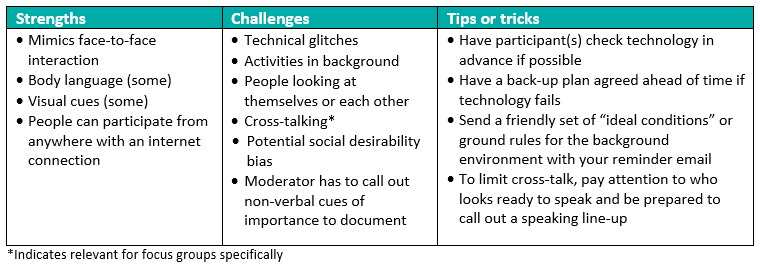

Mode of data collection: Online video

For our study, online video participants used internet-connected computers, at their homes or in another convenient location, to access a private online platform at a designated date and time. The platform supported web-connected video, with audio over a telephone conference line. Participants could see the moderator, other participants (in focus groups), and themselves, retaining some of the potential for non-verbal communication from the in-person mode. In fact, we stopped using the term “face-to-face” for in-person meetings because some participants felt the online video medium still provided a (sufficient) face-to-face connection. In most cases, I conducted video-based interviews and focus groups from my office, though, consistent with the remote nature of the medium, I also conducted a few weekend focus groups from my home. Securing a do-not-disturb zone away from kids and dogs was paramount in those cases.

There is a range of online hosting platforms available that can support and facilitate online video-based data collection. For the purposes of our study, we solicited bids from a number of market research firms that specialize in online qualitative data collection services and selected the one that provided the best value for the money (in terms of real-time support during data collection; assistance with testing and troubleshooting webcams and equipment prior to data collection; links to a secure, date- and time-specific online “meeting room”; and audio recording). We also opted to keep the audio portion of the data collection over a phone line for two reasons. In the case of a weak internet connection on someone’s part (mine included), the conversation could continue via phone even if we lost the video connection for a few moments. The audio landline connection also facilitated recording the conversation, with arguably better and more consistent audio quality. In some cases, free video connection platforms like Skype, Zoom or FaceTime may suffice, and some facilitate audio recording as well.

Strengths

Video connections do a fairly good job of mimicking the face-to-face interaction of in-person interviews, transmitting the same types of non-verbal communication that help to facilitate rapport (e.g., smiles, nodding), and they allow participants to see and gather visual details about the researcher and other participants, with the main advantage of obviating the need for travel. All parties can remain at a place of their choosing or comfort to participate in the interview or focus group. For individual interviews in particular, the online video route is very similar to an in-person interview.

Challenges

Since a visual connection between researcher and participant(s) is maintained, online video presents some of the same challenges as in-person interviewing related to the potential for social desirability bias and first (visual) impressions. The moderator also still has to verbalize non-verbal cues of importance for the record. But a primary difference of “face-to-face” interactions via video is what participants can see and do while maintaining a visual connection. With a video feed, a participant can not only see the researcher (and other participants) but usually also herself. The potential for making eye contact with oneself slightly changes the dynamics, as we’re all guilty of checking out our own appearance when our video image is being broadcast. Generally, for individual interviews, having two faces on a screen isn’t an issue.

Finally, a challenge common to all online modes that was particularly an issue for online video is the technology that enables these interactions. Internet (and mobile phone) connections can be weak. Logging in to a site online but connecting to audio via phone is not familiar to many. Batteries lose power. Obviously, none of these are insurmountable issues, but they can add up to inefficient use of time and interrupt the flow of conversation in ways that disrupt data collection.

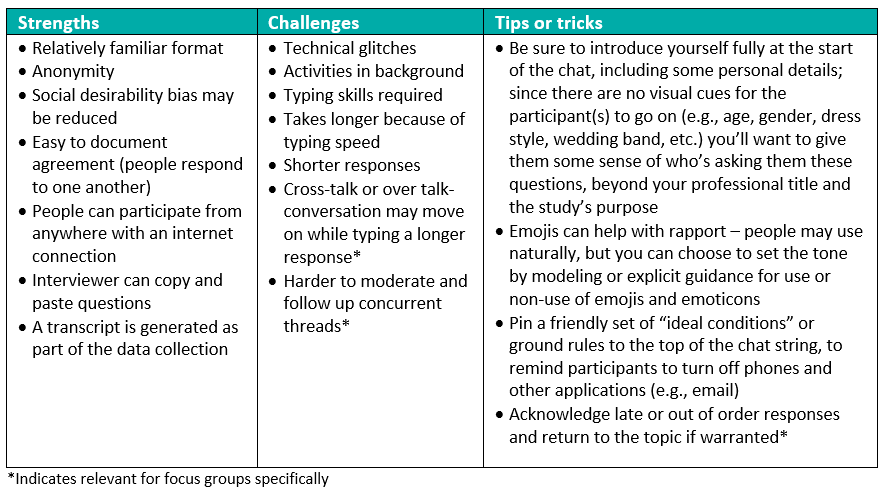

Mode of data collection: Online chat-based

Online chat-based participants in our study also used internet-connected computers to log in to a private online platform at a designated date and time. These activities mirrored the use of instant messaging or participating in a chat room: I typed a question or follow-up and the participants typed their responses, all in real time, back and forth. We conducted focus group sessions chat room style, where respondents could type simultaneously, and participants could see each other’s responses as they were entered. I could ask clarifying questions or follow-up queries immediately after a response was provided.

In this way, the chat-style data collection maintains the synchronous aspect of traditional qualitative research (we’re communicating in real time, over a one- to two-hour period), but shifts the mode of communication from verbal to written or, more specifically, typed. There is some evidence to suggest that removing the visual connection between researcher and participant may reduce social desirability bias and/or increase disclosure and discussion of sensitive topics (something we sought to assess in our study; to be covered in a future blog post). With the ubiquity of texting and instant messaging (at least among our study sample), this was a pretty familiar way of communicating for most women. We did request that participants use a device with a standard keyboard (rather than a phone or touch-screen tablet) to allow faster and more accurate typing, though some would argue they are faster at talk-to-text or thumb-typing on a phone. For my part, I logged into the chat room via my laptop and, again following the remote nature of the data collection, was usually at the office, though sometimes at home.

Strengths

Challenges

A few of the challenges of text-based chat-style data collection are similar to those of the online video mode, in that there can be issues with the connection technology and the remote nature of the meeting makes it easier for participants to be looking at or doing other things during the discussion. The latter is exacerbated in the anonymous text-based world because there is no way to get a sense, visually, that participant(s) are engaged. The same is true for the participant, who cannot see if the researcher is yawning at her answers and doesn’t even know what this “researcher” person looks like. Emoticons and emojis can help to bridge the gap, for both researcher and participant, to modulate the tone and meaning of written text, though it’s important to strike a balance – you don’t want to end up with a transcript full of ambiguous or superfluous emojis.

The other set of challenges for this mode of data collection relates to typing skill and speed, and subsequently, timing. The average English conversation rate is about 150 words per minute, while average typing speed is around 40 words per minute. This means that communication is s-l-o-w during these types of interviews and focus groups. Participants have to read the question, think about it a moment (or longer) and then type a response. As a result, these interviews, and especially focus groups, tend to either take longer or cover less ground, and responses are typically shorter and less in-depth. It means a lot of waiting time for the researcher, which can feel inefficient. Also, variable typing speeds and response lengths in a focus group mean that what is a synchronous conversation can turn asynchronous quickly. If I follow up on the first, fast, response with a second question, and participants are still typing responses to my initial question, we now have two threads live. It’s a different kind of cross-talk that requires skilled management to make sure everyone feels heard and remains engaged.
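To put those two rates side by side, here is a back-of-the-envelope calculation in Python. The 150 and 40 words-per-minute figures come from the paragraph above; the 60-minute session length is an assumed example, and the calculation deliberately ignores reading and thinking time, so it is a rough upper bound rather than a measurement.

```python
SPEAKING_WPM = 150  # approximate conversational speaking rate (from the text)
TYPING_WPM = 40     # approximate average typing speed (from the text)

def words_in_session(rate_wpm, minutes):
    """Upper bound on words produced at a steady rate with no pauses."""
    return rate_wpm * minutes

def slowdown(speaking_wpm=SPEAKING_WPM, typing_wpm=TYPING_WPM):
    """How many times slower typing is than speaking."""
    return speaking_wpm / typing_wpm

session_minutes = 60  # assumed session length, for illustration only
spoken = words_in_session(SPEAKING_WPM, session_minutes)
typed = words_in_session(TYPING_WPM, session_minutes)

print(f"Typing is about {slowdown():.2f}x slower than speaking")
print(f"{session_minutes} min: up to {spoken} spoken vs {typed} typed words")
```

Under these simplified assumptions, a typed session would need to run nearly four times as long to yield the same volume of words as a spoken one.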

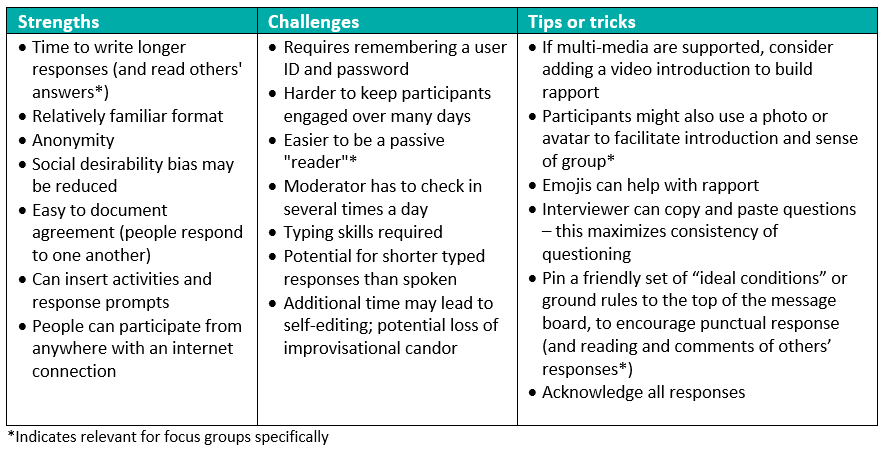

Mode of data collection: Online post-based

For our study, this mode of data collection also relied on typed correspondence but was the only asynchronous approach, where the interviewer or moderator and the participant were neither online at the same time nor responding in real time, making it the mode most different from the traditional in-person qualitative setting. For interviews, I emailed the participant three to five questions that she could respond to at her convenience, typically within 24–48 hours. My next email then contained follow-up questions based on her responses to the initial questions, along with a set of three to five new questions, and this process was repeated until we’d completed the interview, typically within a week.

The same procedure was followed for asynchronous focus groups, but for this we used a secure message board platform. I posted three to five questions on the discussion board each day over several days. Participants were asked to sign in each day, respond to the questions, and read and comment on each other’s posted responses. After posting the day’s questions, I logged in and reviewed responses several times a day and posted follow-up questions as appropriate, to which participants could again respond. Participants were prompted to complete unanswered questions before moving on to new ones. Most of these focus groups took a little over a week to complete. On the moderator end, use of a dedicated message board platform meant that I could receive a notification each time there was new activity on the board. My response time was usually linked to my schedule as well – if I had time to respond immediately, I did. If I was in the middle of a meeting or carpool, or received the notification on my phone rather than my computer, I waited until the next time I was at my laptop and could thoughtfully respond.

Strengths

Challenges

Beyond the shared challenges of technological issues and the need for typing skills detailed above, the primary challenge of an asynchronous data collection approach is that both researcher and participant(s) need to stay engaged over a period of days. While the remote log-in and round-the-clock access to the platform maximizes convenience, sometimes things that are convenient get forgotten or fall to the bottom of the to-do list. And for focus group participants, since there are few connections (visual or otherwise) between themselves and other members of the group, it’s easy to become a passive participant, reading others’ responses and simply chiming in with an “I agree”. This, along with the need to check in several times a day, makes more work for the moderator, both in terms of total time invested (three hours compared to two hours for the other modes in our study) and in managing individual participants within the group. Finally, though participants might take more time to compose their typed responses than in chat-based settings (and this is a possibility more than a probability), they can also use that extra time to “clean up” their response, not just in copy-editing terms; in re-reading their response before hitting “post” they have time to reconsider what they want to share and how they want to present it.

So, what did I learn that I’ll use in future research?

Since this was research on research methods, I did my best to maintain a neutral stance on each mode, adjusting how I set up the social environment and managed the flow of conversation and interactions to maximize rapport and the chances for a productive data collection session. But now that our analyses of the data generated are complete, I can reflect a bit more on the experiential side of collecting all those data, and which approach(es) I might select for my next project. One of my main takeaways from this study is that there is neither a “best” choice nor a “bad” choice for collecting qualitative data in any of the ways I’ve detailed here. Obviously, research resources, timelines, study population, location and internet access are all going to factor into a decision, but if we acknowledge that and set those constraints aside for a moment, I can share my picks.

For individual interviews, I found the in-person and online video modes to work pretty comparably. Rapport and communication were easy and I had rich and emotion-laced conversations using both approaches. I personally find it a bit easier to build a connection in person (to be able to, say, pass a box of tissues if needed), but I did also love the convenience of being able to connect for an interview from my desk or my home. The synchronous chat-based online mode of typing back and forth was a distant third. I had to be vigilant about remaining focused during the lag time between when I posed my question and when the respondent finished typing her response, with those familiar ellipsis dots “…” flashing on my screen. The asynchronous (in this case email-based) back-and-forth approach to interviewing eliminated the what-to-do-while-waiting predicament, but felt a little disjointed, particularly in the way I needed to follow up responses from Day 1 while also posing new questions for Day 2.

Conversely, the asynchronous mode for focus groups, using a message board, would be among my top two picks, just behind in-person discussions. As with interviews, the physical proximity and visual connection of the in-person mode made rapport and communication easy, and interaction between participants was abundant with very little prompting from me. But one of the serious challenges of in-person focus groups is the scheduling. The average number of participants in our in-person focus groups was lower than in any of the online modes because of this challenge; despite over-recruiting for each group, we were lucky to get five mothers of toddlers together at the same time. But do you know where mothers of toddlers do “gather”? On social media platforms, where someone posts a question or comment and others read that and respond. I think this is what made the message board mode of data collection work well for our study population – it was familiar, it was convenient and everyone could participate on their own schedule. This was true for me as well – and once I had the board set up, much of the question asking was automated, freeing me to focus on checking new responses, posing follow-ups and trying to keep those “passive” participants engaged. The real-time chat-room focus groups suffered from the same typing time lag as the interviews, and technology issues with the internet-video-phone mode outweighed its convenience for me in the online video focus groups.

That said, the optimal approach is the one that works to connect us with the people we want to talk to, and we can make modifications around the edges, as I’ve suggested in the tips and tricks above, to facilitate rapport via any mode. In a future post I’ll share what we learned about how participants viewed the different ways of engaging in qualitative research, to provide another perspective on each of these modes of data collection.

Photo credit: Cavan Images/Getty

1. Quantitative survey researchers, I can hear you gasping and crying “bias!” But think about it – when you ask a closed-ended question on a survey, whether in ACASI (audio computer-assisted self-interviewing) or in person, despite any pre-testing you may have done, you have no idea 1) how the respondent understands the question, 2) how the respondent is interpreting the question and response options, or 3) how accurately the response options reflect an individual’s experience or opinions. For these reasons, qualitative methods, done well, can offer greater face and content validity and greater internal reliability of data.